Robots learn by imitating other robots

Chongjie Zhang, collaborators find a solution to train a team of robots

Robots are increasingly being used in manufacturing, agriculture and health care. But programming a team of robots to carry out individual tasks raises a question: How can robots learn from other robots even if they are built differently?

A multi-institutional team, including Chongjie Zhang, associate professor of computer science & engineering in the McKelvey School of Engineering at Washington University in St. Louis, developed a new method that enables robots to achieve intentions shown by their peers. The method, called Intention-Aligned Imitation Learning (IAIL), is inspired by human cultural learning. Results of their research were published March 18, 2026, in Science Robotics.

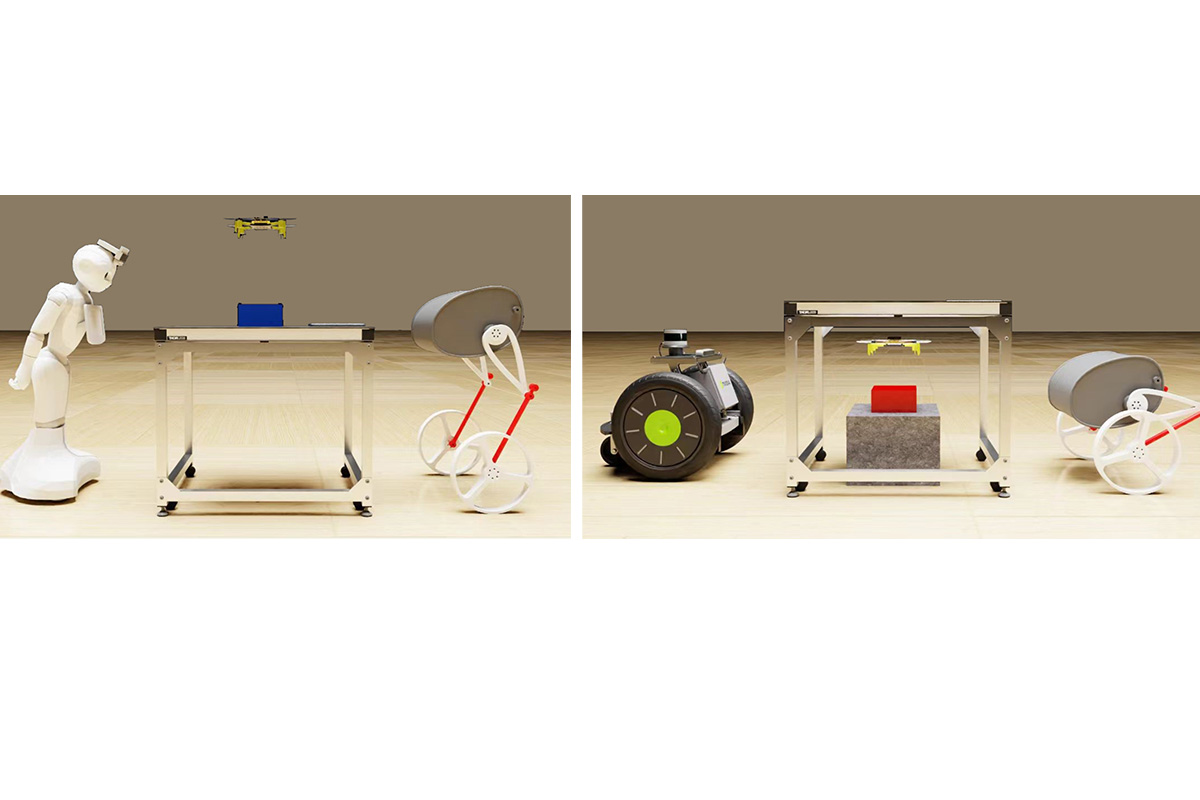

Traditional methods usually require the robots to have physical capabilities and conditions similar to the demonstrator’s, which makes it hard for robots to adapt to different environments or work with robots that have different designs. The team’s solution is IAIL, which uses high-level intentions, or goals, described in natural language to align and adapt robot behaviors. It also allows robots to understand the purpose of actions and apply them in various situations, even with different physical designs.

The team tested IAIL with seven different robots in 30 different scenarios. The tests showed the method’s ability to adapt behaviors across different robots and tasks and to support multi-robot teamwork.

“Rather than reproducing low-level motor actions or embodiment-specific features, our approach contrasts and associates behaviors according to their task objectives, which supports both agent-to-agent and team-to-team transfer,” Zhang said.

An intention is the goal or result that a robot aims to achieve through an action. It is described using human language, which helps separate the goal from the specifics of how the action is controlled or physically performed. The team created a shared intention space by aligning motion embeddings with their corresponding annotations, which allowed the similarity of intentions to be measured among robots with different designs and capabilities. The learning robot then retrieves the most demonstration-relevant behavior from its pre-trained repertoire by matching intentions. This method also works with teams of robots, the team said.
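The retrieval step can be pictured with a small sketch. This is an illustration only, not the team’s implementation: the behavior names, the three-dimensional vectors and the `retrieve_behavior` helper are all hypothetical, and cosine similarity is assumed here as the matching measure. In a real system the embeddings would come from learned encoders rather than hand-picked numbers.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve_behavior(demo_intention, repertoire):
    """Return (name, score) of the repertoire behavior whose intention
    embedding is most similar to the demonstrated intention."""
    scored = (
        (name, cosine_similarity(demo_intention, embedding))
        for name, embedding in repertoire.items()
    )
    return max(scored, key=lambda pair: pair[1])


# Hypothetical intention embeddings for the learner's pre-trained behaviors.
# Assumption: every vector lives in the same shared intention space.
repertoire = {
    "push_box_forward": [0.9, 0.1, 0.0],
    "pick_up_object": [0.1, 0.9, 0.1],
    "open_door": [0.0, 0.2, 0.9],
}

# Hypothetical embedding of the intention inferred from another robot's demo.
demo_intention = [0.8, 0.2, 0.1]

best_name, best_score = retrieve_behavior(demo_intention, repertoire)
print(best_name)
```

Because matching happens on intentions rather than on joint angles or motor commands, the demonstrator and the learner in this sketch never need to share a body plan; they only need embeddings in the same space.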

Zhang said their method is inspired by human cultural learning mechanisms, where individuals understand the actions of others and express them through their own behavior. It is also in line with cognitive science, which shows that human learners prioritize reproducing a demonstrator’s inferred goals over their exact movements, as well as with neuroscience, which shows that humans interpret behavior in terms of intentions rather than by imitating movements.

“Our linguistic approach offers a complementary and robust perspective, providing a shared, high-level code for intention that bridges embodiment gaps where direct visual or motion correspondence is unreliable,” Zhang said. “By organizing robot behaviors in a shared intention space aligned with descriptors humans can understand, IAIL supports legibility and predictability, which plays to our favor in collaborations, particularly with humans.”

Chen X, Gao Y, Liu H, Yang F, Ghadirzadeh A, Yang J, Liang B, Zhang C, Lam TL, Zhu S-C. Cross-Robot Behavior Adaptation through Intention Alignment. Science Robotics, March 18, 2026. DOI: 10.1126/scirobotics.adv2250.