Boundaries of agricultural fields worldwide now publicly available

Nathan Jacobs, collaborators launch model that could transform agriculture, food security

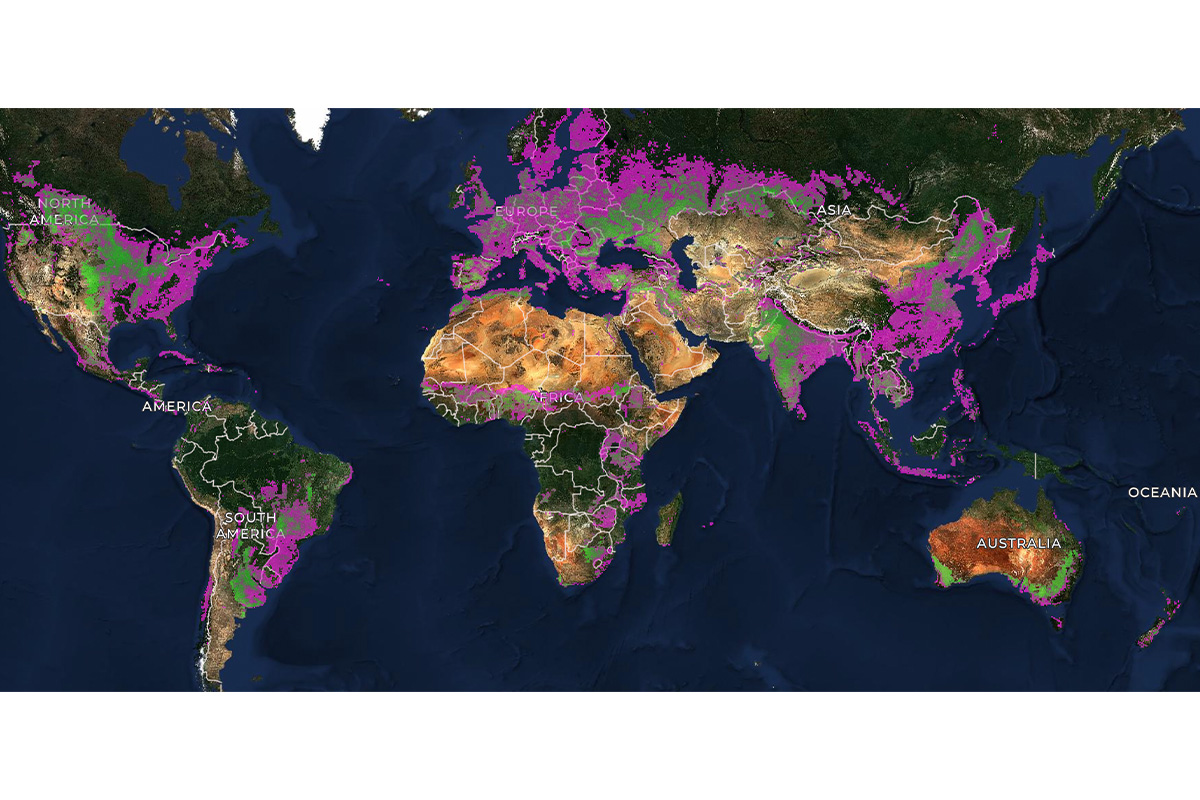

Nearly half of the world's habitable land is used for agriculture, according to the World Economic Forum. Now, for the first time, every agricultural field on Earth has a boundary on a map, thanks to a novel model designed to infer field boundaries all over the world.

Nathan Jacobs, professor of computer science in the McKelvey School of Engineering at Washington University in St. Louis and co-director of the WashU Geospatial Research Initiative, was part of a collaboration of academic and industry researchers who developed the model, which was funded and co-developed by Taylor Geospatial and the Microsoft AI for Good Lab. Their goal was to make geospatial artificial intelligence (GeoAI) publicly available worldwide.

Agricultural field boundary maps, which delineate individual farm plots, are important for monitoring agriculture and informing decisions, including mapping crop types, estimating yields, surveilling for pests and diseases, and tracking agricultural programs. Up-to-date maps would be a boon to agriculture and to food security worldwide, researchers say, but producing them is not simple because fields vary widely in size and type around the world. The model, however, captured that full range.

The model, PRUE (A Practical Recipe for Field Boundary Segmentation at Scale), is described in a preprint for the June 2026 IEEE/CVF Computer Vision and Pattern Recognition Conference (CVPR).

“Over the past few months, we've been running the algorithm at a global scale and making it accessible to a wide range of users,” said Jacobs, assistant vice provost for digital transformation and director of the Multimodal Vision Research Lab at WashU. “In late April, we released the world's first global dataset of agricultural field boundaries. It's globally available at a 10m resolution.”

The maps provide the first globally consistent field-level unit of analysis for crop monitoring, food security and downstream agricultural science, the collaborators said.

Jacobs has worked with Taylor Geospatial on this project for the past few years. His collaborators include Hannah Kerner at Arizona State University; Lyndon Estes at Clark University; Caleb Robinson of the Microsoft AI for Good Research Lab; Isaac Corley, previously at Wherobots and now director of AI/ML Research at Taylor Geospatial; and other Taylor Geospatial Technical Fellows, along with the organizations Source Cooperative and Wherobots. The team also produced Fields of The World, the training dataset needed to create the model. Running the PRUE model worldwide then produced the global field boundary maps, which cover 2024 (1.62 billion polygons) and 2025 (1.55 billion polygons).

The resulting maps cover 241 countries and territories, and the model outperformed 18 other models on the Fields of The World benchmark. Country-scale field boundary maps for Japan, Mexico, Rwanda, South Africa and Switzerland are also publicly available.

The researchers say their results show PRUE's real-world advantages over other models, and that their maps are more accurate and reveal important changes in the landscape.

Now, the team is partnering with NASA Harvest, the Food and Agriculture Organization of the United Nations and other global and regional partners to provide the dataset to food security analysts, climate researchers and agricultural development organizations worldwide.

Muhawenayo G, Robinson C, Khanal S, Fang Z, Corley I, Wolam A, Gao T, Strnad L, Avery R, Estes L, Tárano AM, Jacobs N, Kerner H. PRUE: A Practical Recipe for Field Boundary Segmentation at Scale. IEEE/CVF Computer Vision and Pattern Recognition Conference (CVPR), June 2026. Preprint: https://arxiv.org/abs/2603.27101

The model code is available at https://github.com/fieldsoftheworld/ftw-prue

Robinson C, Muhawenayo G, Khanal S, Fang Z, Corley I, Tárano AM, Estes L, Marcus J, Jacobs N, Kerner H, Becker-Reshef I, Lavista Ferres JM. The first global agricultural field boundary map at 10m resolution. https://www.microsoft.com/en-us/research/wp-content/uploads/2026/04/FTW_Global_Map_v1.1.pdf